Why your AI needs a receipt

When AI handles your money, your health, and your legal affairs, blind faith isn't enough. Here is the Bittensor subnet working to solve this invisible crisis.

I have a friend from daycare, a fellow dad, who recently welcomed his second child.

If you’ve ever had a newborn, you know the specific kind of brain fog that sets in around week three. You are operating on two hours of broken sleep, caffeine, and pure survival instinct.

In this zombie state, my friend drove to our local grocery store. He wandered the aisles in a daze, filled his cart with diapers, milk, and frozen dinners, and walked out to his car. He loaded the bags, drove home, and started unpacking.

Halfway through putting the milk in the fridge, there was a knock at the door.

It was the police.

In his fog, he had completely bypassed the checkout. He hadn’t paid. He wasn’t trying to steal; his brain just skipped a step. But to the store’s security system, there was no difference between a tired dad and a shoplifter.

In the eyes of the law, the only difference between the two is a slip of paper about four inches long.

The Receipt.

We usually think of receipts as trash. But that piece of paper is a “Proof of State.” It proves a transaction happened exactly as claimed. Without it, my friend was technically a criminal. With it, he would have been a customer.

Right now, the $2 trillion AI industry is operating like my sleep-deprived friend.

When you ask an AI model to diagnose an X-ray, or when you ask a trading bot to manage your crypto portfolio, the process happens inside a black box. You put an input in and you get an output out.

But just like my friend walking out of the store, we have no proof of what happened in the middle.

How do you know the AI used the specific medical model it claimed to use?

How do you know the trading bot actually executed the strategy you paid for?

How do you know your private data wasn’t siphoned off?

Currently, AI runs on a philosophy of blind faith.

That works fine for writing poems. It does not work when we start building the future of finance and healthcare.

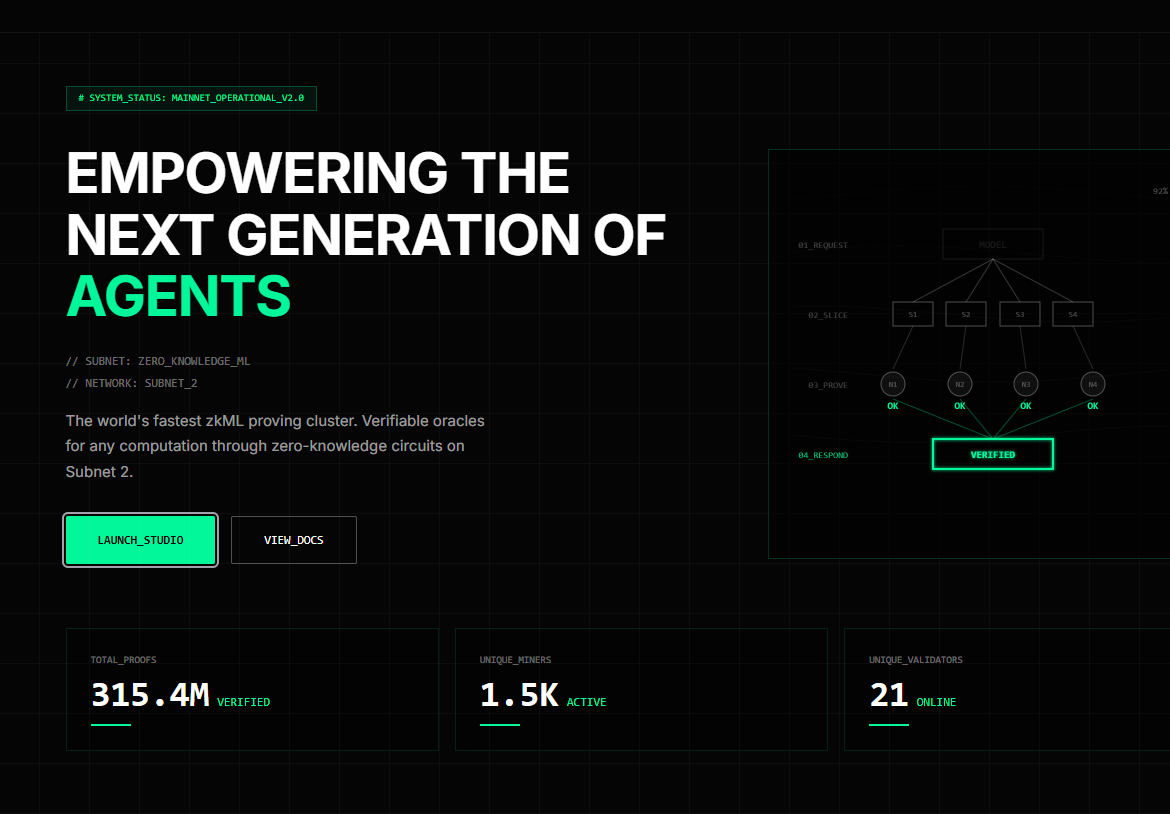

Today, we are looking at DSperse (formerly Omron), Subnet 2 on Bittensor. They are building the receipt printer for the AI age.

The Invisible Problem

To understand why DSperse is critical, we have to look at where AI is going.

We are transitioning from the Chatbot Era to the Agentic Era.

In the Chatbot Era, the stakes were low. If ChatGPT hallucinated a fact about the Roman Empire, it was annoying, but nobody got hurt.

But then came the rise of AI Agents.

An AI Agent is fundamentally different from a chatbot. It is autonomous software designed not just to talk, but to do. It breaks out of the text box and integrates with your environment: executing shell commands, controlling browsers, and managing files without human intervention.

Agents represent the shift to active AI.

They don’t just suggest an action; they take control of your computer.

They can move a mouse, navigate to a decentralized exchange, and execute a trade.

This shifts the paradigm completely.

Chatbot Era: “Write me an email about buying Apple stock.” (Low Risk)

Agentic Era: “Here are my API keys. Go buy $5,000 of Apple stock.” (High Risk)

If I am going to let a decentralized AI agent manage my savings, I cannot rely on blind faith. I need proof. Currently, the industry offers two imperfect options:

1. The “Black Box” Model (Blind Faith)

We trust Anthropic or Google because they are massive corporations. But we have no actual proof of what is happening inside the box. We just hope they aren’t manipulating the results.

2. The “Glass House” Model (Total Exposure)

The alternative is open source. To prove a model is honest, the developer publishes the code.

The problem for a business is that this code is its “secret sauce.” If a hedge fund reveals its proprietary model just to prove it works, it loses its competitive advantage.

This is the deadlock.

Businesses can’t afford to give away their secrets (Option 2). Yet, users won’t accept blind faith (Option 1) when their bank accounts are on the line.

We need a third option. We need a way to verify the result without exposing the secret.

The Solution (The “Secret Recipe”)

The solution is a technology called zkML (Zero-Knowledge Machine Learning).

Let’s bring this back to my neighborhood.

There is a local bakery that makes incredible malt loaf. The owner claims it uses three specific organic grains and a signature white sourdough starter. That’s why he charges $12 a loaf.

How do I verify he is telling the truth? For all I know, he uses generic supermarket flour.

The “Black Box” Solution:

I just buy the bread and hope I’m not getting ripped off. (This is how we currently treat AI).

The “Glass House” Solution:

I stand in his kitchen and watch him bake. The problem is that if I watch him, I’ll learn his recipe and could open a competing bakery next door.

The “DSperse” Solution:

Imagine the baker baked his bread inside a sealed, opaque box containing a Digital Inspector who watches every step.

This inspector scans the flour barcodes and weighs the starter. When the bread pops out, the box prints a Certificate. The Certificate says: “I certify the secret steps were followed (99.9% confidence).”

The certificate proves the process without revealing the recipe:

I (the customer) get verification that I wasn’t scammed.

The Baker (the developer) keeps his secret recipe safe.

This is what DSperse does for AI. It acts as that Digital Inspector, generating a cryptographic receipt that proves a computation happened correctly without revealing the proprietary code.
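zkML proves an entire model's forward pass, which takes specialized proving systems far beyond a newsletter example. The core idea of a zero-knowledge "receipt" fits in a few lines, though. Below is a minimal sketch of a different, much simpler technique: Schnorr's classic zero-knowledge proof of knowledge, made non-interactive with the Fiat-Shamir hash trick. The "secret recipe" here is just a number `x`; the baker publishes `y = g^x mod p` and can then prove he knows `x` without revealing it. This is not DSperse's proving system, the function names are hypothetical, and the group parameters are deliberately tiny toy values (p = 1019), so it is insecure.

```python
import hashlib
import secrets

# Toy group parameters (INSECURE, for illustration only):
# P = 2*Q + 1 is a safe prime, and G = 4 generates the subgroup of prime order Q.
P = 1019
Q = 509
G = 4


def challenge(y: int, r: int, message: bytes) -> int:
    """Fiat-Shamir: derive the verifier's challenge by hashing the public transcript."""
    data = f"{G}|{y}|{r}|".encode() + message
    return int.from_bytes(hashlib.sha256(data).digest(), "big") % Q


def keygen() -> tuple[int, int]:
    """Return (secret x, public y = G^x mod P). x plays the role of the secret recipe."""
    x = secrets.randbelow(Q - 1) + 1
    return x, pow(G, x, P)


def prove_knowledge(x: int, y: int, message: bytes) -> tuple[int, int]:
    """Prove knowledge of x with y = G^x mod P, without revealing x."""
    k = secrets.randbelow(Q - 1) + 1  # one-time random nonce
    r = pow(G, k, P)                  # commitment to the nonce
    c = challenge(y, r, message)      # hash-derived challenge
    s = (k + c * x) % Q               # response; the random k masks x
    return r, s


def verify(y: int, message: bytes, proof: tuple[int, int]) -> bool:
    """Accept iff G^s == r * y^c (mod P). The verifier never sees x."""
    r, s = proof
    c = challenge(y, r, message)
    return pow(G, s, P) == (r * pow(y, c, P)) % P


if __name__ == "__main__":
    x, y = keygen()
    proof = prove_knowledge(x, y, b"loaf #42")
    print("valid proof accepted:", verify(y, b"loaf #42", proof))
    r, s = proof
    print("tampered proof accepted:", verify(y, b"loaf #42", (r, (s + 1) % Q)))
```

The proof consists only of `r` and `s`. Because the random nonce `k` masks `x`, the verifier learns that the prover knows the secret and nothing else. Real deployments use groups with roughly 256-bit order (often elliptic curves) so the secret cannot simply be brute-forced.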

The Bittensor Angle

If this technology is so revolutionary, why doesn’t ChatGPT come with a receipt?

Because generating these receipts is incredibly heavy.

Creating a Zero-Knowledge Proof is computationally expensive. Historically, proving a simple AI inference took 1,000x to 10,000x more computing power than just doing the inference itself.

If an AI takes 1 second to give you an answer, it might take 1,000 seconds (nearly 17 minutes) to generate the receipt, and at the high end close to three hours. That is too slow for the real world.
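The arithmetic behind that claim is a single multiplication. This sketch assumes proving time scales linearly with the compute multiplier on the same hardware, which is a simplification; `proving_seconds` is a hypothetical helper.

```python
# Historical zk-proving overhead multipliers quoted in the text (1,000x to 10,000x).
OVERHEADS = (1_000, 10_000)


def proving_seconds(inference_seconds: float, overhead: int) -> float:
    """Proof time if proving costs `overhead` times the compute of the inference itself."""
    return inference_seconds * overhead


for overhead in OVERHEADS:
    t = proving_seconds(1.0, overhead)
    print(f"{overhead:,}x overhead: {t:,.0f} s = {t / 60:.1f} min = {t / 3600:.1f} h")
```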

This is where the magic of Bittensor comes in.

The team behind Subnet 2, Inference Labs, realized a single centralized server would never be fast enough. So, they built DSperse to act as a distributed supercomputer.

Instead of asking one computer to lift a 1,000-pound rock, DSperse breaks the rock into a thousand 1-pound pebbles. It slices the proof generation into tiny segments and distributes them across the Bittensor network.
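The rock-and-pebbles idea can be sketched as a toy scheduler. DSperse's actual slicing and scheduling logic isn't described here, so this is a minimal sketch under stated assumptions: equal-cost segments, round-robin assignment, fully parallel miners, and no coordination or verification overhead. All names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    index: int
    proving_seconds: float


def slice_job(total_seconds: float, num_segments: int) -> list[Segment]:
    """Split one proving job into equal-cost segments (a simplifying assumption)."""
    per_segment = total_seconds / num_segments
    return [Segment(i, per_segment) for i in range(num_segments)]


def assign_round_robin(segments: list[Segment], num_miners: int) -> list[list[Segment]]:
    """Deal segments out like cards: segment i goes to miner i mod num_miners."""
    buckets: list[list[Segment]] = [[] for _ in range(num_miners)]
    for seg in segments:
        buckets[seg.index % num_miners].append(seg)
    return buckets


def wall_clock_seconds(buckets: list[list[Segment]]) -> float:
    """Miners work in parallel, so the job finishes when the busiest miner finishes."""
    return max(sum(seg.proving_seconds for seg in bucket) for bucket in buckets)


segments = slice_job(total_seconds=1_000.0, num_segments=1_000)  # the "1,000-pound rock"
for miners in (1, 100, 1_000):
    print(miners, "miner(s):", wall_clock_seconds(assign_round_robin(segments, miners)), "s")
```

Because the finish time is set by the busiest miner, uneven segments would erode the ideal speedup, and real networks also pay for coordination and for checking each piece, costs this sketch ignores.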

Hundreds of miners compete to prove these segments. Because there is money (TAO) on the line, miners have started a hardware arms race, building custom chips specifically designed to generate these proofs faster than anyone else on earth.

As of February 2026, Subnet 2 has generated over 300 million proofs, doing at scale what academic researchers called impractical just a few years ago.

Real-World Stories

Technology is only interesting if it solves human problems.

The Trustless Hedge Fund (Vanta SN8)

If I ask you to invest in my AI trading bot and show you a spreadsheet of 300% annual returns, how do you know I didn’t just type those numbers into Excel?

In the traditional world, you hire an auditor like Deloitte to come in, check the books, and stamp a document. That takes six months and costs a fortune.

By integrating DSperse’s proof generation, Vanta (SN8) aims to create a mathematical “audit trail” for every trade its AI makes.

The proof shows that each trade was executed by the specific model you subscribed to, and that the returns are real, without Vanta having to reveal its proprietary trading strategy to the world. This is the infrastructure Vanta is building right now to create the first truly verifiable hedge fund.
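Part of an "audit trail" can be shown concretely: a hash chain makes a trade log tamper-evident, so nobody can quietly edit past entries. This minimal sketch covers only that half. Proving that each trade came from the subscribed model is what the zk proof would add, and here the `proof` field is just a placeholder string. The entry format and field names such as `model_commitment` are hypothetical, not Vanta's or DSperse's schema.

```python
import hashlib
import json


def entry_hash(body: dict) -> str:
    """SHA-256 over a canonical JSON encoding (sorted keys) of an entry."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_trade(log: list[dict], model_commitment: str, trade: dict, proof: str) -> None:
    """Append a trade entry that links to the hash of the previous entry."""
    prev = log[-1]["hash"] if log else "0" * 64
    body = {"prev": prev, "model_commitment": model_commitment, "trade": trade, "proof": proof}
    log.append({**body, "hash": entry_hash(body)})


def verify_chain(log: list[dict]) -> bool:
    """Recompute every hash and link; an edited or reordered entry breaks the chain."""
    prev = "0" * 64
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if body["prev"] != prev or entry_hash(body) != entry["hash"]:
            return False
        prev = entry["hash"]
    return True


MODEL = hashlib.sha256(b"hypothetical model weights").hexdigest()
trades: list[dict] = []
append_trade(trades, MODEL, {"side": "buy", "asset": "TAO", "qty": 10}, "<zk proof placeholder>")
append_trade(trades, MODEL, {"side": "sell", "asset": "TAO", "qty": 4}, "<zk proof placeholder>")
print("untouched log valid:", verify_chain(trades))
trades[0]["trade"]["qty"] = 100  # someone "edits the spreadsheet"
print("edited log valid:", verify_chain(trades))
```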

Verifiable Vision (Score SN44)

In early February 2026, Score (SN44) and DSperse (SN2) announced a major partnership to solve a critical problem in computer vision.

Score specializes in analyzing video feeds in real time. Think of security cameras at airports, sensors on oil rigs, or tactical cameras in football stadiums (such as its work with Reading FC).

But in high-stakes industries like aviation, you can’t just trust an AI when it says “The runway is clear.” If the AI is wrong, people die. Aviation bodies such as the FAA and NAV Canada require an audit trail. They need proof.

DSperse proves that the AI actually analyzed the specific video frame it claimed to, and that the output (e.g., “Runway Clear”) was generated by the certified model, not by a glitch.
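The two claims in that sentence correspond to two bindings a receipt has to carry: which frame, and which model. A minimal sketch, assuming a hypothetical receipt format (`InferenceReceipt`, `CERTIFIED_MODELS`, and `check_bindings` are illustrative, not DSperse's or Score's schema): hashes tie the receipt to one exact frame and one certified model. Hash checks alone only confirm what the receipt claims; the zk proof, left opaque and unverified here, is what would show the output was actually computed from that frame by that model.

```python
import hashlib
from dataclasses import dataclass


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


@dataclass(frozen=True)
class InferenceReceipt:
    frame_hash: str  # commitment to the exact video frame analyzed
    model_hash: str  # commitment to the model weights used
    output: str      # e.g. "Runway Clear"
    proof: bytes     # the zk proof itself (opaque here; not checked in this sketch)


# Hypothetical registry of certified model commitments.
CERTIFIED_MODELS = {sha256_hex(b"hypothetical certified runway model v1")}


def check_bindings(receipt: InferenceReceipt, frame: bytes) -> list[str]:
    """Return the problems found; an empty list means the receipt's bindings line up."""
    problems = []
    if receipt.frame_hash != sha256_hex(frame):
        problems.append("receipt is for a different frame")
    if receipt.model_hash not in CERTIFIED_MODELS:
        problems.append("model is not on the certified list")
    return problems


frame = b"raw pixels of frame 1031"
receipt = InferenceReceipt(sha256_hex(frame), next(iter(CERTIFIED_MODELS)), "Runway Clear", b"")
print(check_bindings(receipt, frame))                 # []
print(check_bindings(receipt, b"a different frame"))  # ['receipt is for a different frame']
```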

The Receipt Economy

The vision for Subnet 2 is that DSperse becomes the “SSL Lock” of the AI internet.

Ask anyone over 30 about the early web and they’ll tell you they were terrified to put their credit card into a website until they saw HTTPS and that little green lock icon. That icon meant: This connection is secure.

DSperse wants to put that lock icon on AI.

In a world where AI agents will negotiate your mortgage, manage your retirement, and draft your contracts, the question is: “Who owns the truth?”

Right now, OpenAI and Google own the truth. They say their model did X, and you’re expected to believe them because they’re big.

Bittensor (and specifically DSperse) is building something different: a world where the truth is owned by no one, but verifiable by everyone.

In the future, when you’re browsing a marketplace of AI agents—looking for a lawyer, a financial advisor, or a co-pilot for your business—you won’t look for the biggest brand name. You’ll look for the “Verified by Bittensor” checkmark.

Which brings me to a question for you:

If you had an agent with that checkmark today, one whose work you could verify with mathematical certainty, what is the first high-stakes task you would hand off to it?

Would you let it file your taxes? Rebalance your crypto portfolio? Diagnose a medical scan? Reply and let me know.

Because in the end, we don’t need more powerful AI. We need accountable AI.

And accountability starts with a simple piece of paper.

The receipt.

Until next time.

Cheers,

Brian

Disclaimer: This is not financial advice. I am a writer documenting the Bittensor ecosystem. Always do your own research.
