The 4 Layers Of Crypto AI (And Where TAO Actually Lives)

Stop comparing Bittensor to NEAR: They're playing different games on different floors of the same building.

It was Saturday afternoon. I was refereeing two boys fighting over who gets to turn on the tap for the garden hose.

My phone buzzed. I got distracted, and the younger one ended up soaked.

A friend who also holds TAO (among other crypto) texted our “Crypto School” group chat: “Bro, what do you think of NEAR? 1M TPS, 46M monthly users, AI agents on the consumer wallet. This is getting real traction. Should I sell some TAO and buy NEAR?”

Quick disclosure before we go further: I’ve been aware of NEAR for years, long enough to have lost a pretty penny on NEAR NFTs in 2021, hoping to ride the next Solana boom. Now I’m a proud owner of NEAR Tinker Union and Antisocial Ape Club, which is to say I own priceless lessons on wasting money.

I get some version of this question every few weeks:

Should I be in TAO or NEAR? Or Render? What about ICP? Isn’t ASI an AI thing too?

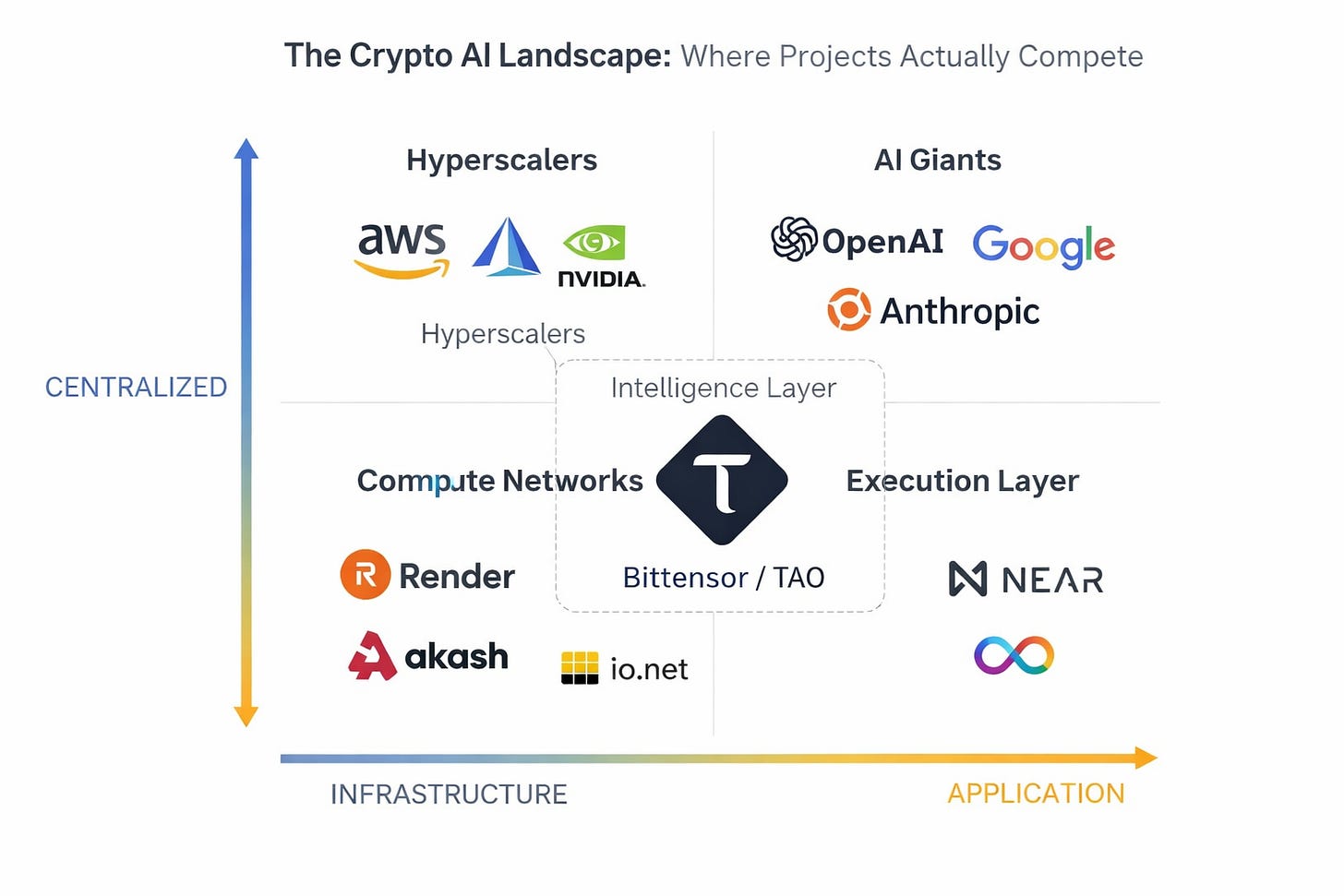

The honest answer is: those projects aren’t competing for the same slot in your portfolio. They sit at different layers of the same stack.

Most “crypto AI landscape” pieces collapse them into a single horse race, and that’s how readers end up holding three accidental bets on the same layer and zero on the others.

So after the garden cleanup, I sat down with my coffee and answered the only way I know how.

With a map and a framework.

The Four Layers of Crypto AI

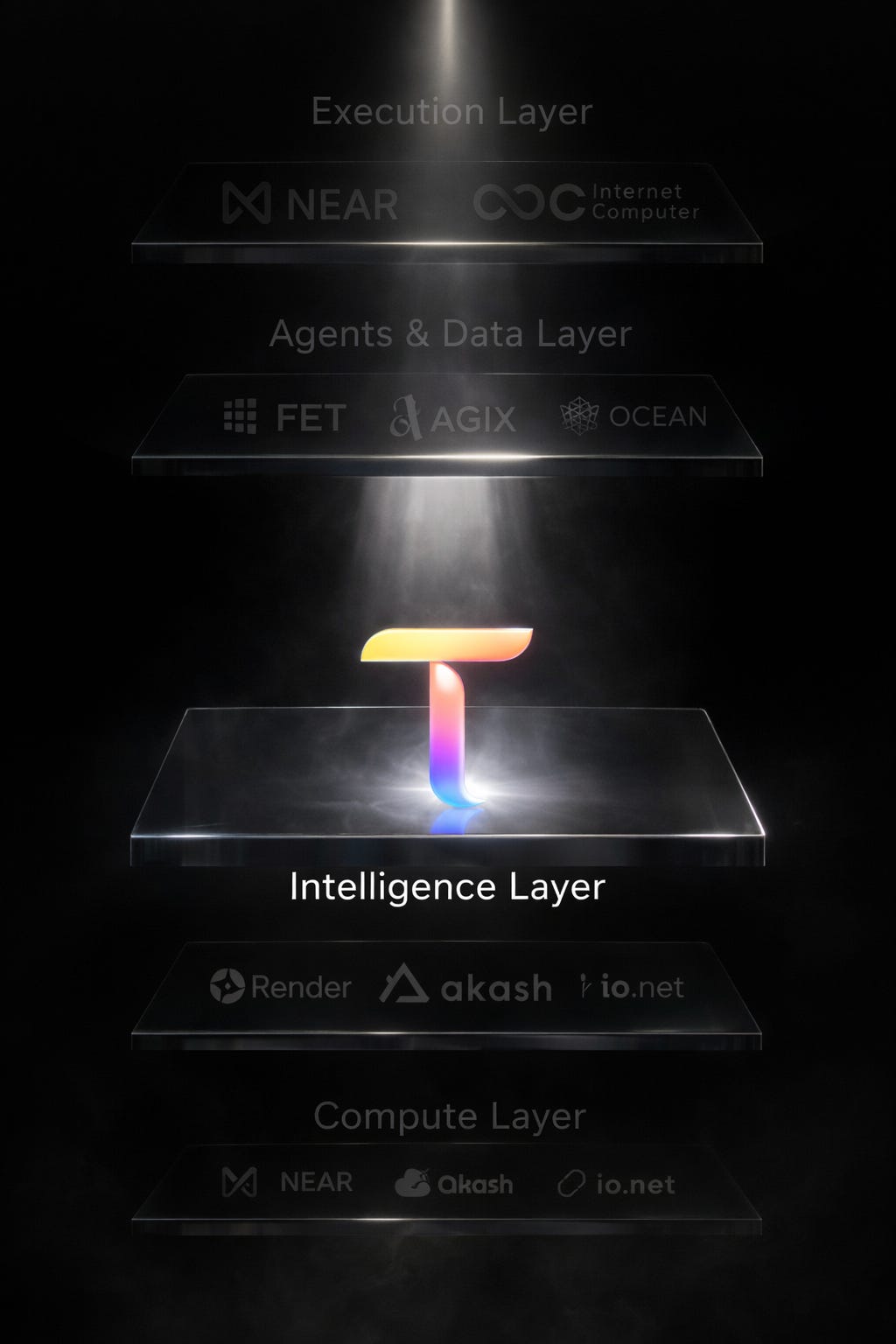

The AI economy is increasingly splitting into four layers: Compute, Intelligence, Agents & Data, and Execution/UX. In each layer, a centralized stack dominates today, while decentralized networks are emerging to compete on incentives, access, and ownership.

Read it down, not across. A bet on Render isn’t a substitute for a bet on TAO. A bet on TAO isn’t a substitute for a bet on NEAR. They could all win, or they could all lose.
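If you want to check your own exposure against the map, here is a minimal Python sketch. The layer assignments are the ones used in this article, not an official taxonomy, and the function names and the example portfolio are hypothetical.

```python
from collections import Counter

# The four layers, in the order used in this article.
LAYERS = ["Compute", "Intelligence", "Agents & Data", "Execution/UX"]

# Project-to-layer assignments as described in this article (an assumption,
# not an official classification).
LAYER_OF = {
    "Render": "Compute",
    "Akash": "Compute",
    "io.net": "Compute",
    "Nosana": "Compute",
    "TAO": "Intelligence",
    "ASI": "Agents & Data",
    "NEAR": "Execution/UX",
    "ICP": "Execution/UX",
}


def layer_exposure(holdings):
    """Count how many holdings sit at each layer; empty layers show 0."""
    counts = Counter(LAYER_OF[name] for name in holdings)
    return {layer: counts.get(layer, 0) for layer in LAYERS}


def missing_layers(holdings):
    """List the layers where the portfolio has no bet at all."""
    exposure = layer_exposure(holdings)
    return [layer for layer, count in exposure.items() if count == 0]


# Hypothetical portfolio: three bets that all land on the same layer.
example = ["Render", "Akash", "io.net"]
print(layer_exposure(example))
print(missing_layers(example))
```

A project that is not in the mapping raises a `KeyError`, which is the code version of "if you can't place it on the map cleanly, that's a flag."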

Let’s walk through each layer.

Compute is the rented muscle

Render started as a decentralized GPU marketplace for 3D rendering and has aggressively expanded into AI inference and training workloads. Akash runs a “decentralized cloud” model: anyone can rent out spare compute. io.net aggregates GPUs on Solana. Nosana plays a similar game.

The pitch is the same across all of them: cheaper than AWS, more resilient, no single point of failure. The reported cost savings vs. centralized clouds run in the 50–80% range depending on workload, which is real money for AI startups burning through inference budgets.
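To put that range in dollar terms, here is a quick back-of-the-envelope sketch in Python. The $10,000 monthly bill is a made-up figure for illustration, and the function name is hypothetical; only the 50–80% range comes from the reported savings above.

```python
def discounted_range(monthly_bill, low_pct=50, high_pct=80):
    """Return (best_case, worst_case) monthly bill after the reported savings.

    low_pct and high_pct are the ends of the reported savings range, in percent.
    """
    best_case = monthly_bill * (100 - high_pct) / 100
    worst_case = monthly_bill * (100 - low_pct) / 100
    return best_case, worst_case


# Hypothetical startup spending $10,000 per month on a centralized cloud.
best, worst = discounted_range(10_000)
print(f"${best:,.0f} to ${worst:,.0f} per month")  # $2,000 to $5,000 per month
```

Actual savings depend on the workload, so treat the output as a range to sanity-check against real quotes, not a forecast.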

This layer is the picks and shovels of crypto AI.

Where they win: Cost, access and resilience.

Where they lose: Frontier-scale training. The hyperscalers still have tighter GPU supply relationships and the kind of integrated networking you need for the biggest runs. Decentralized compute is best positioned for marginal workloads like inference, fine-tuning, edge deployment, or rendering pipelines.

Intelligence is where Bittensor lives alone

This is the part most readers underweight when they map the space.

Bittensor is the most established crypto network explicitly trying to build decentralized markets for model production at scale.

NEAR and ICP are more execution and app-layer plays, Render rents compute, and ASI is more about agent coordination and data infrastructure.

Bittensor is different. Miners compete to produce valuable model outputs, validators score them, and subnet economics determine where emissions flow.

With dTAO, that incentive system has become more market-driven, which is why I think of it as Proof of Intelligence: AI production coordinated by an open economy of 128 subnets rather than by one company’s balance sheet.

Where it wins: Specialization, open competition and economic alignment between the people who build the AI and the people who use it.

Where it loses: Onboarding. It lacks the kind of seamless UX that makes ChatGPT effortless for someone who’s never opened a terminal.

I’ll come back to Bittensor in more detail in the spotlight section. The point for now: it’s the only one of the projects on this map that’s playing the intelligence game.

Agents and data are the ASI Alliance bet

The Artificial Superintelligence Alliance (ASI), formed when Fetch.ai, SingularityNET, and Ocean Protocol merged under the FET ticker, is the full-stack agent and data play. Fetch handles autonomous agents that can negotiate and execute tasks, SingularityNET runs an AI services marketplace, and Ocean handles tokenized data exchange.

The vision is good, but the execution is more fragmented than the marketing suggests. The merger is still in the awkward phase where three projects operate under one banner without fully feeling like one stack. It’s worth watching, but not yet a thesis I’d build a portfolio around the way I would around the intelligence layer.

Where it wins: Vertical integration. Owning agents, services and data in one stack is genuinely rare in crypto AI.

Where it loses: Coherence. The merger is still bedding in and you can feel the seams.

Execution/UX is where AI apps actually live

NEAR is the most practical of the bunch. It’s an “AI-ready” L1 with developer tooling that lets you build with natural-language prompts, generate contracts, and ship dApps without being deep in the weeds. It’s high-throughput, user-friendly, and offers solid grants for AI builders. NEAR is trying to be the place where AI-powered applications get used.

Internet Computer (ICP) plays a different game. It runs AI models and agents fully on-chain via canisters, with ICP burned for compute cycles. This is total verifiability and sovereignty: nothing is off-chain, and everything is trustless. That makes it heavier infrastructure, but powerful when on-chain history and execution really matter.

Where they win: On-ramps. Both projects make AI-powered dApps actually usable.

Where they lose: Neither is producing intelligence. They’re hosting the apps that consume it.

One non-crypto note worth flagging

The most important decentralized AI player on the planet doesn’t have a token at all.

Hugging Face hosts more than two million open-source models. It’s the GitHub of AI.

It’s where Llama, DeepSeek, Qwen, and most of the open-weight world get distributed. Multiple Bittensor subnets host their models there too. Anyone can pull weights, fine-tune on their own hardware, and ship a product without ever touching a crypto network.

Why does this matter for your TAO thesis? Because it’s the alternative path.

Open-source AI is closing the gap with closed-source faster than most retail crypto investors realize. Bittensor’s value at the model layer has to clear a bar set by free, open weights you can run locally. Sometimes it does, when specialization, incentives, and economic alignment matter. Sometimes it doesn’t.

Knowing where Hugging Face fits on your map is part of being honest about where TAO fits.

Bittensor Subnet Spotlights

Three live subnets worth knowing by name, because “Bittensor subnets” as a concept is too abstract for most people to act on.

Chutes (SN64). Serverless inference. You hit an API, the subnet routes your request to the cheapest available miner, and you get a response. It’s the closest thing to a drop-in replacement for the OpenAI API that exists in crypto AI today. If you’ve ever wanted to test what decentralized inference actually feels like, this is the one to start with.

Apex (SN1). The flagship language-model subnet, with agentic workflows, tool use, and chain-of-thought reasoning, built to push inference quality past what single open-source models like Llama or Mistral can deliver alone. Clean SDK and API, explicitly positioned for builders. If Chutes is the fastest way to experience decentralized inference, Apex is the fastest way to build on it.

Teutonic (formerly Templar) (SN3) and the broader training subnets. Decentralized training of large models: the kind of work that produced the 72-billion-parameter run earlier this year that outperformed older Llama baselines. The governance picture around this layer of subnets has been turbulent in 2026, but the infrastructure work is still real and still worth tracking.

These three are a starting point and by no means a complete list. The point is that “subnets” stops being abstract once you’ve used one.

Two frameworks you can use this week

Framework one: how to evaluate any crypto AI project

Three questions, in order:

What layer does it sit at? If you can’t place it on the map cleanly, that’s a flag.

Does the token actually do work? Is it required for the network to function, or is it bolted on as a pretend governance token?

Is anyone using it? Look for real revenue, usage and workloads. Not pitch decks.

If a project clears all three, it’s worth deeper diligence. If it clears two, it’s worth watching. If it clears one or zero, it’s a vibe.
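The rubric above is simple enough to write down as a function. This is a minimal Python sketch; the function and parameter names are hypothetical, and answering each question honestly is still the hard part.

```python
def verdict(fits_a_layer: bool, token_does_work: bool, has_real_usage: bool) -> str:
    """Apply the three-question rubric and return the article's verdict."""
    # Booleans sum as 1 (True) and 0 (False), so this counts cleared questions.
    cleared = sum([fits_a_layer, token_does_work, has_real_usage])
    if cleared == 3:
        return "deeper diligence"
    if cleared == 2:
        return "worth watching"
    return "vibe"  # one or zero questions cleared


# Hypothetical project: fits a layer and has usage, but the token is bolted on.
print(verdict(fits_a_layer=True, token_does_work=False, has_real_usage=True))
# worth watching
```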

Framework two: no-code entry points

You don’t need to run a validator to participate. The simplest way to use Bittensor is to query a subnet. Chutes is one of the easiest on-ramps. I’m working on an upcoming walkthrough to demonstrate just how easy this can be.

The simplest way to participate economically is to stake TAO on a subnet whose work you believe in, which under dTAO directs emissions toward that subnet. Both can be done from a wallet without a terminal.

What this looks like in real life

A freelance writer today pays Anthropic $20–200 per month for drafting and fact-checking. Tomorrow, they query Apex (SN1) directly for comparable quality at lower cost (no corporate margins). If performance slips, they simply switch subnets, with no lock-in and no surprise price hikes.

Apex is live today with working APIs and products; the main friction is awareness.

Or, picture a small business owner building a customer service agent: ASI coordinates the logic, Apex (SN1) powers the language model and NEAR handles the interface. No single vendor owns the stack.

Your compass from here

Crypto AI isn’t one bet. It’s at least four. The projects you’ve heard lined up against each other are doing different jobs.

The smart move is to know which ones you’re exposed to and why: Compute, Intelligence, Agents and Execution are different layers with different roles to play.

Bittensor’s edge isn’t that it’s the only AI token. It’s that it’s the only one playing the model game, coordinating the production of intelligence itself, not renting the hardware or hosting the apps.

If you’ve never actually used a subnet, do it this week. Start with Chutes. Then come back and tell me if the intelligence layer still feels abstract.

Until next time.

Cheers,

Brian

Disclaimer: This is not financial advice. I am a writer documenting the Bittensor ecosystem. Always do your own research.